The new king of Infrastructure as Code (IaC)

Monitoring your DL models while in production. How to build a scalable data collection pipeline

Decoding ML Notes

This week’s topics:

The new king of Infrastructure as Code (IaC)

How to build a scalable data collection pipeline

Monitoring your DL models while in production

The new king of Infrastructure as Code (IaC)

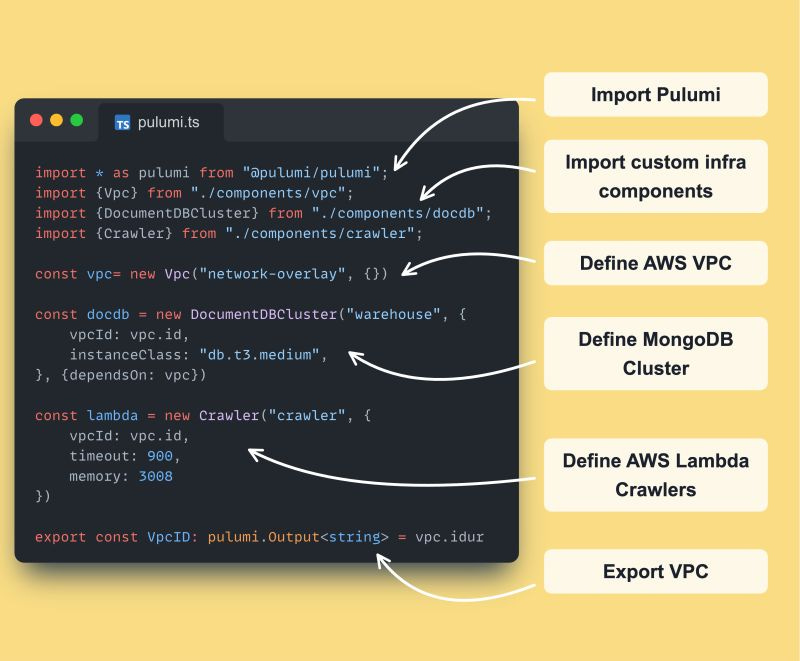

This is 𝘁𝗵𝗲 𝗻𝗲𝘄 𝗸𝗶𝗻𝗴 𝗼𝗳 𝗜𝗻𝗳𝗿𝗮𝘀𝘁𝗿𝘂𝗰𝘁𝘂𝗿𝗲 𝗮𝘀 𝗖𝗼𝗱𝗲 (𝗜𝗮𝗖). Here is 𝘄𝗵𝘆 it is 𝗯𝗲𝘁𝘁𝗲𝗿 than 𝗧𝗲𝗿𝗿𝗮𝗳𝗼𝗿𝗺 or 𝗖𝗗𝗞 ↓

→ I am talking about Pulumi ←

Let's see what it is made of

↓↓↓

𝗪𝗵𝗮𝘁 𝗶𝘀 𝗣𝘂𝗹𝘂𝗺𝗶 𝗮𝗻𝗱 𝗵𝗼𝘄 𝗶𝘀 𝗶𝘁 𝗱𝗶𝗳𝗳𝗲𝗿𝗲𝗻𝘁?

Unlike other IaC tools that use YAML, JSON, or a Domain-Specific Language (DSL), Pulumi lets you write infrastructure code in general-purpose languages such as Python, TypeScript, JavaScript, Go, etc.

- This enables you to leverage existing programming knowledge and tooling for IaC tasks.

- Pulumi integrates with familiar testing libraries for unit and integration testing of your infrastructure code.

- It integrates with most cloud providers (AWS, GCP, Azure, Oracle, etc.)

𝗕𝗲𝗻𝗲𝗳𝗶𝘁𝘀 𝗼𝗳 𝘂𝘀𝗶𝗻𝗴 𝗣𝘂𝗹𝘂𝗺𝗶:

𝗙𝗹𝗲𝘅𝗶𝗯𝗶𝗹𝗶𝘁𝘆: Use your preferred programming language for IaC, and it works with most cloud providers out there.

𝗘𝗳𝗳𝗶𝗰𝗶𝗲𝗻𝗰𝘆: Leverage existing programming skills and tooling.

𝗧𝗲𝘀𝘁𝗮𝗯𝗶𝗹𝗶𝘁𝘆: Write unit and integration tests for your infrastructure code.

𝗖𝗼𝗹𝗹𝗮𝗯𝗼𝗿𝗮𝘁𝗶𝗼𝗻: Enables Dev and Ops to work together using the same language.

If you disagree, try applying OOP or control flow (if and for statements) in Terraform's HCL syntax.

It works, but it quickly becomes a living hell.
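Here is what that looks like in plain Python: a small class, a loop, and a conditional generate per-environment definitions, and an ordinary test function checks them. This is a minimal sketch under stated assumptions: `Environment`, `lambda_specs`, and the spec fields are hypothetical, and the output is plain data rather than Pulumi resources so it runs without the Pulumi SDK. In a real Pulumi program you would pass such values to resource constructors from a provider package such as `pulumi_aws`, and Pulumi unit tests typically also mock the engine (via `pulumi.runtime.set_mocks`), which is skipped here.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Hypothetical description of one deployment environment."""
    name: str
    memory_mb: int
    high_availability: bool


def lambda_specs(envs: list[Environment], functions: list[str]) -> list[dict]:
    """Generate one hypothetical Lambda definition per function and environment."""
    specs = []
    for env in envs:                    # a plain loop instead of HCL's count/for_each
        for fn in functions:
            spec = {
                "name": f"{fn}-{env.name}",
                "memory_mb": env.memory_mb,
                "timeout_s": 900,
            }
            if env.high_availability:   # a plain if instead of HCL conditional expressions
                spec["reserved_concurrency"] = 10
            specs.append(spec)
    return specs


envs = [Environment("dev", 512, False), Environment("prod", 2048, True)]


# Because it is ordinary Python, ordinary unit tests work on the definitions.
def test_only_prod_reserves_concurrency() -> None:
    specs = lambda_specs(envs, ["crawler"])
    assert "reserved_concurrency" not in specs[0]   # dev
    assert specs[1]["reserved_concurrency"] == 10   # prod


if __name__ == "__main__":
    for spec in lambda_specs(envs, ["crawler-linkedin", "crawler-medium"]):
        print(spec)
    test_only_prod_reserves_concurrency()
```

With pytest installed, functions named `test_*` like the one above are collected automatically, so infrastructure checks can run in the same CI job as your application tests.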

𝗛𝗼𝘄 𝗣𝘂𝗹𝘂𝗺𝗶 𝘄𝗼𝗿𝗸𝘀:

- Pulumi uses a declarative approach. You define the desired state of your infrastructure.

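- It tracks the current state of your infrastructure in a state backend (the Pulumi Cloud service by default, or a self-managed option such as a local directory or an S3 bucket).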

- When changes are made to the code, Pulumi compares the desired state with the current state and creates a plan to achieve the desired state.

- The plan shows what resources will be created, updated, or deleted.

- You can review and confirm the plan before Pulumi executes it.
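The compare-and-plan step above can be sketched in a few lines. This is a toy model, not Pulumi's engine: resources are keyed by hypothetical names, and their properties are plain dicts.

```python
def plan(desired: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Diff desired vs. current state into create/update/delete actions (toy model)."""
    create = sorted(name for name in desired if name not in current)
    delete = sorted(name for name in current if name not in desired)
    update = sorted(
        name for name in desired
        if name in current and desired[name] != current[name]
    )
    return {"create": create, "update": update, "delete": delete}


current_state = {
    "ecr-repo": {"scan_on_push": False},
    "old-lambda": {"memory_mb": 512},
}
desired_state = {
    "ecr-repo": {"scan_on_push": True},      # changed property -> update
    "crawler-lambda": {"memory_mb": 1024},   # new resource -> create
}                                            # "old-lambda" is gone -> delete

print(plan(desired_state, current_state))
# {'create': ['crawler-lambda'], 'update': ['ecr-repo'], 'delete': ['old-lambda']}
```

In Pulumi, `pulumi preview` shows this kind of plan and `pulumi up` asks for confirmation before applying it. A real engine also has to decide when a property change forces a replacement instead of an in-place update, which this sketch ignores.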

→ It works similarly to Terraform, but with all the benefits that your favorite programming language and its existing tooling provide.

→ It works similarly to CDK, but faster and for your favorite cloud provider (not only AWS).

What do you think? Have you used Pulumi?

We started using it for the LLM Twin course, and so far, we love it! I will probably migrate fully from Terraform to Pulumi in future projects.

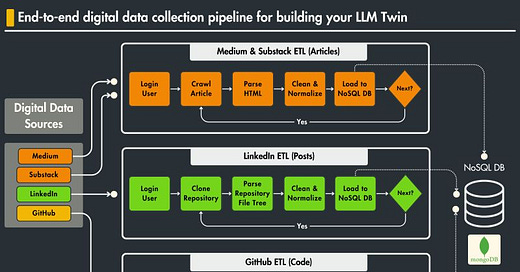

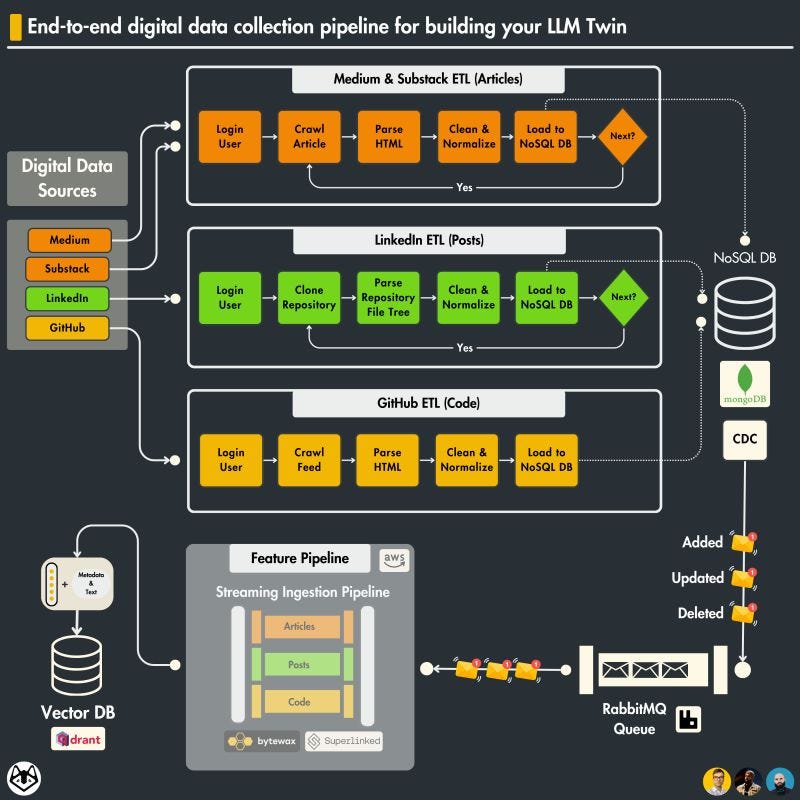

How to build a scalable data collection pipeline

𝗕𝘂𝗶𝗹𝗱, 𝗱𝗲𝗽𝗹𝗼𝘆 to 𝗔𝗪𝗦, 𝗜𝗮𝗖, and 𝗖𝗜/𝗖𝗗 for a 𝗱𝗮𝘁𝗮 𝗰𝗼𝗹𝗹𝗲𝗰𝘁𝗶𝗼𝗻 𝗽𝗶𝗽𝗲𝗹𝗶𝗻𝗲 that 𝗰𝗿𝗮𝘄𝗹𝘀 your 𝗱𝗶𝗴𝗶𝘁𝗮𝗹 𝗱𝗮𝘁𝗮 → 𝗪𝗵𝗮𝘁 do you need 🤔

𝗧𝗵𝗲 𝗲𝗻𝗱 𝗴𝗼𝗮𝗹?

𝘈 𝘴𝘤𝘢𝘭𝘢𝘣𝘭𝘦 𝘥𝘢𝘵𝘢 𝘱𝘪𝘱𝘦𝘭𝘪𝘯𝘦 𝘵𝘩𝘢𝘵 𝘤𝘳𝘢𝘸𝘭𝘴, 𝘤𝘰𝘭𝘭𝘦𝘤𝘵𝘴, 𝘢𝘯𝘥 𝘴𝘵𝘰𝘳𝘦𝘴 𝘢𝘭𝘭 𝘺𝘰𝘶𝘳 𝘥𝘪𝘨𝘪𝘵𝘢𝘭 𝘥𝘢𝘵𝘢 𝘧𝘳𝘰𝘮:

- LinkedIn

- Medium

- Substack

- GitHub

𝗧𝗼 𝗯𝘂𝗶𝗹𝗱 𝗶𝘁 - 𝗵𝗲𝗿𝗲 𝗶𝘀 𝘄𝗵𝗮𝘁 𝘆𝗼𝘂 𝗻𝗲𝗲𝗱 ↓

𝟭. 𝗦𝗲𝗹𝗲𝗻𝗶𝘂𝗺: a tool for automating web browsers, used here through its Python bindings to interact with web pages programmatically (e.g., logging into LinkedIn, navigating through profiles).

𝟮. 𝗕𝗲𝗮𝘂𝘁𝗶𝗳𝘂𝗹𝗦𝗼𝘂𝗽: a Python library for parsing HTML and XML documents. It creates parse trees that help us extract the data quickly.

𝟯. 𝗠𝗼𝗻𝗴𝗼𝗗𝗕 (𝗼𝗿 𝗮𝗻𝘆 𝗼𝘁𝗵𝗲𝗿 𝗡𝗼𝗦𝗤𝗟 𝗗𝗕): a NoSQL database fits our unstructured text data like a glove

𝟰. 𝗔𝗻 𝗢𝗗𝗠: an Object-Document Mapper, a technique that maps between the object model in your application and the documents in a document database

𝟱. 𝗗𝗼𝗰𝗸𝗲𝗿 & 𝗔𝗪𝗦 𝗘𝗖𝗥: to deploy our code, we have to containerize it, build an image on every change to the main branch, and push it to AWS ECR

𝟲. 𝗔𝗪𝗦 𝗟𝗮𝗺𝗯𝗱𝗮: we will deploy our Docker image to AWS Lambda - a serverless computing service that allows you to run code without provisioning or managing servers. It executes your code only when needed and scales automatically, from a few daily requests to thousands per second

𝟳. 𝗣𝘂𝗹𝘂𝗺𝗶: the IaC tool used to programmatically create the AWS infrastructure: the MongoDB instance, ECR, the Lambdas, and the VPC

𝟴. 𝗚𝗶𝘁𝗛𝘂𝗯 𝗔𝗰𝘁𝗶𝗼𝗻𝘀: used to build our CI/CD pipeline - on any merged PR to the main branch, it will build & push a new Docker image and deploy it to the AWS Lambda service
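To see how parsing, the ODM, and the NoSQL database fit together, here is a minimal sketch. Two stand-ins keep it runnable with only the standard library: Python's built-in `html.parser` replaces BeautifulSoup, and an in-memory dict replaces MongoDB. `ParagraphExtractor`, `ArticleDocument`, `InMemoryCollection`, and the sample HTML and link are hypothetical; the collection's method names only mirror pymongo's `insert_one`/`find_one`.

```python
import uuid
from dataclasses import asdict, dataclass, field
from html.parser import HTMLParser
from typing import Optional


class ParagraphExtractor(HTMLParser):
    """Collects the text inside <p> tags (stand-in for BeautifulSoup's find_all("p"))."""

    def __init__(self) -> None:
        super().__init__()
        self.paragraphs: list[str] = []
        self._in_p = False

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._in_p = True
            self.paragraphs.append("")

    def handle_endtag(self, tag):
        if tag == "p":
            self._in_p = False

    def handle_data(self, data):
        if self._in_p:                  # text of nested tags like <b> is kept too
            self.paragraphs[-1] += data


@dataclass
class ArticleDocument:
    """Hypothetical ODM model: one Python object <-> one database document."""
    platform: str
    link: str
    content: list[str]
    id: str = field(default_factory=lambda: str(uuid.uuid4()))

    def to_mongo(self) -> dict:
        doc = asdict(self)
        doc["_id"] = doc.pop("id")      # MongoDB stores the primary key under "_id"
        return doc

    @classmethod
    def from_mongo(cls, doc: dict) -> "ArticleDocument":
        data = dict(doc)
        data["id"] = data.pop("_id")
        return cls(**data)


class InMemoryCollection:
    """Stand-in for a MongoDB collection."""

    def __init__(self) -> None:
        self._docs: dict[str, dict] = {}

    def insert_one(self, doc: dict) -> None:
        self._docs[doc["_id"]] = doc

    def find_one(self, query: dict) -> Optional[dict]:
        for doc in self._docs.values():
            if all(doc.get(k) == v for k, v in query.items()):
                return doc
        return None


html = "<html><body><h1>Title</h1><p>First <b>paragraph</b>.</p><p>Second one.</p></body></html>"
parser = ParagraphExtractor()
parser.feed(html)

article = ArticleDocument(platform="medium", link="https://medium.com/@user/post",
                          content=parser.paragraphs)
collection = InMemoryCollection()
collection.insert_one(article.to_mongo())

loaded = ArticleDocument.from_mongo(collection.find_one({"platform": "medium"}))
print(loaded.content)  # ['First paragraph.', 'Second one.']
```

The point of the ODM layer is that crawler code only deals with `ArticleDocument` objects, while the `_id` conversion and the raw-dict shape stay in one place.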
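Once the image runs on AWS Lambda, each invocation enters through a handler function. The sketch below shows one possible design, not necessarily the course's: the handler picks a crawler based on the link's domain. `lambda_handler(event, context)` follows the standard Python handler signature; the event shape `{"link": ...}`, the crawler functions, and the API-Gateway-style `statusCode`/`body` response are assumptions for illustration.

```python
from typing import Callable
from urllib.parse import urlparse


# Hypothetical crawlers; real ones would drive Selenium and parse the HTML.
def crawl_medium(link: str) -> dict:
    return {"platform": "medium", "link": link}


def crawl_github(link: str) -> dict:
    return {"platform": "github", "link": link}


CRAWLERS: dict[str, Callable[[str], dict]] = {
    "medium.com": crawl_medium,
    "github.com": crawl_github,
}


def get_crawler(link: str) -> Callable[[str], dict]:
    """Map a URL to its crawler by domain (a leading "www." is stripped)."""
    domain = urlparse(link).netloc.lower()
    if domain.startswith("www."):
        domain = domain[len("www."):]
    if domain not in CRAWLERS:
        raise ValueError(f"No crawler registered for domain: {domain}")
    return CRAWLERS[domain]


def lambda_handler(event: dict, context) -> dict:
    """Entry point that Lambda calls with the invocation event and a context object."""
    link = event["link"]
    try:
        result = get_crawler(link)(link)
    except ValueError as err:
        return {"statusCode": 400, "body": str(err)}
    return {"statusCode": 200, "body": result}


# The handler is a plain function, so it can be exercised locally without Lambda.
print(lambda_handler({"link": "https://www.github.com/user/repo"}, None))
```

Keeping the dispatch table separate from the handler means adding a new platform is one new crawler plus one dictionary entry, and the whole flow stays testable on a laptop before the GitHub Actions pipeline ships it.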

𝘾𝙪𝙧𝙞𝙤𝙪𝙨 𝙝𝙤𝙬 𝙩𝙝𝙚𝙨𝙚 𝙩𝙤𝙤𝙡𝙨 𝙬𝙤𝙧𝙠 𝙩𝙤𝙜𝙚𝙩𝙝𝙚𝙧?

Then...

↓↓↓

Check out 𝗟𝗲𝘀𝘀𝗼𝗻 𝟮 from the FREE 𝗟𝗟𝗠 𝗧𝘄𝗶𝗻 𝗖𝗼𝘂𝗿𝘀𝗲 created by Decoding ML

...where we will walk you 𝘀𝘁𝗲𝗽-𝗯𝘆-𝘀𝘁𝗲𝗽 through the 𝗮𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲 and 𝗰𝗼𝗱𝗲 of the 𝗱𝗮𝘁𝗮 𝗽𝗶𝗽𝗲𝗹𝗶𝗻𝗲:

🔗 The Importance of Data Pipelines in the Era of Generative AI

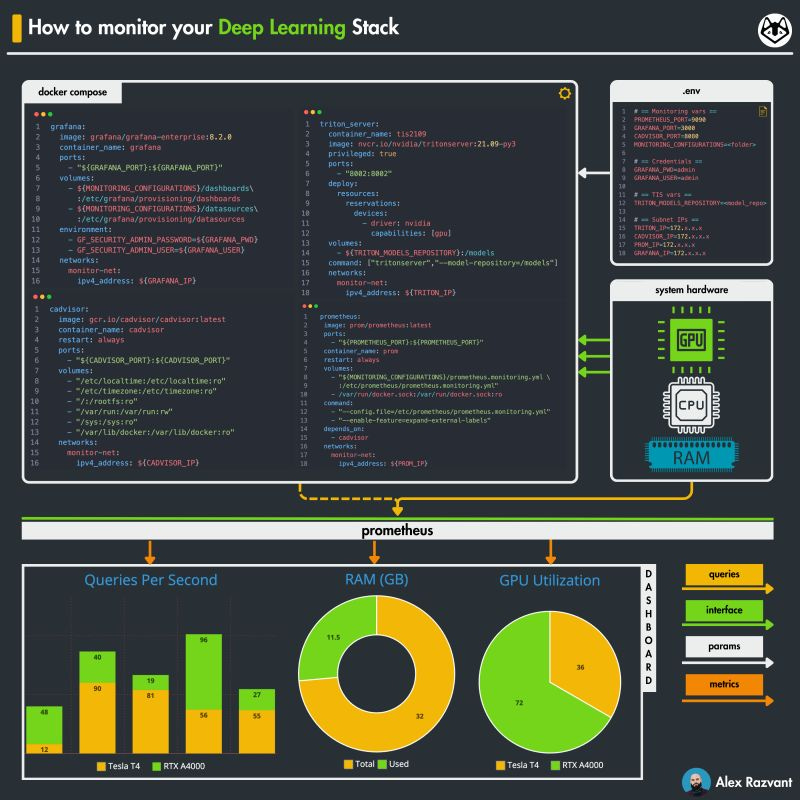

Monitoring your DL models while in production

𝗠𝗼𝗻𝗶𝘁𝗼𝗿𝗶𝗻𝗴 is 𝗧𝗛𝗘 𝗸𝗲𝘆 𝗠𝗟𝗢𝗽𝘀 𝗲𝗹𝗲𝗺𝗲𝗻𝘁 in ensuring your 𝗺𝗼𝗱𝗲𝗹𝘀 in 𝗽𝗿𝗼𝗱𝘂𝗰𝘁𝗶𝗼𝗻 are 𝗳𝗮𝗶𝗹-𝘀𝗮𝗳𝗲. Here is an 𝗮𝗿𝘁𝗶𝗰𝗹𝗲 on 𝗠𝗟 𝗺𝗼𝗻𝗶𝘁𝗼𝗿𝗶𝗻𝗴 using Triton, Prometheus and Grafana ↓

In the article, the author started with an example from one of his projects: a main processing task was supposed to take <5 𝘩𝘰𝘶𝘳𝘴, but in production, it jumped to >8 𝘩𝘰𝘶𝘳𝘴.

→ 𝘛𝘩𝘪𝘴 (𝘰𝘳 𝘴𝘰𝘮𝘦𝘵𝘩𝘪𝘯𝘨 𝘴𝘪𝘮𝘪𝘭𝘢𝘳) 𝘸𝘪𝘭𝘭 𝘩𝘢𝘱𝘱𝘦𝘯 𝘵𝘰 𝘢𝘭𝘭 𝘰𝘧 𝘶𝘴.

Even to the greatest.

It's impossible to always anticipate everything that will happen in production (and sometimes it is a waste of time even to try).

That is why you always need eyes and ears on your production ML system.

Otherwise, imagine how much $$$ or how many users he would have lost if he hadn't detected the ~3-4 hour performance loss as quickly as possible.

Afterward, he explained step-by-step how to use:

- 𝗰𝗔𝗱𝘃𝗶𝘀𝗼𝗿 to collect RAM/CPU usage per container.

- 𝗧𝗿𝗶𝘁𝗼𝗻 𝗜𝗻𝗳𝗲𝗿𝗲𝗻𝗰𝗲 𝗦𝗲𝗿𝘃𝗲𝗿 to serve ML models and expose GPU-specific metrics.

- 𝗣𝗿𝗼𝗺𝗲𝘁𝗵𝗲𝘂𝘀 to bind the metrics generators to the consumer: it scrapes and stores the metrics exposed by cAdvisor and Triton so they can be queried.

- 𝗚𝗿𝗮𝗳𝗮𝗻𝗮 to visualize the metrics.
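Prometheus ties these pieces together by periodically scraping an HTTP `/metrics` endpoint on each generator, which returns plain text in the Prometheus exposition format. The sketch below parses a small hand-written sample of that format. The metric names follow cAdvisor and Triton conventions (`container_memory_usage_bytes`, `nv_gpu_utilization`), but the label values and numbers are made up, and the parser handles only simple `name{labels} value` lines (it ignores optional timestamps and does not handle escaped quotes or the full spec).

```python
import re

SAMPLE = """\
# HELP container_memory_usage_bytes Current memory usage in bytes.
# TYPE container_memory_usage_bytes gauge
container_memory_usage_bytes{name="triton"} 2147483648
container_memory_usage_bytes{name="grafana"} 104857600
# TYPE nv_gpu_utilization gauge
nv_gpu_utilization{gpu_uuid="GPU-0"} 0.87
"""

LINE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?\s+(?P<rest>.+)$'
)
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text: str) -> list[tuple[str, dict[str, str], float]]:
    """Parse simple exposition-format lines into (name, labels, value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):   # skip blank lines and HELP/TYPE comments
            continue
        match = LINE_RE.match(line)
        if match is None:
            continue
        labels = dict(LABEL_RE.findall(match.group("labels") or ""))
        value = float(match.group("rest").split()[0])  # drop an optional timestamp
        samples.append((match.group("name"), labels, value))
    return samples


metrics = parse_metrics(SAMPLE)
for name, labels, value in metrics:
    print(name, labels, value)

# In the real stack, Grafana would query Prometheus (PromQL) for this;
# here we check GPU utilization directly against an arbitrary 0.8 threshold.
gpu = [v for n, _, v in metrics if n == "nv_gpu_utilization"]
print("GPU busy" if max(gpu) > 0.8 else "GPU ok")
```

Because every generator speaks the same text format, Prometheus does not need to know anything about containers or GPUs; it only needs the list of endpoints to scrape.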

𝗖𝗵𝗲𝗰𝗸 𝗶𝘁 𝗼𝘂𝘁 𝗼𝗻 𝗗𝗲𝗰𝗼𝗱𝗶𝗻𝗴 𝗠𝗟

↓↓↓

🔗 How to ensure your models are fail-safe in production?

Images

Unless otherwise stated, all images were created by the author.